FAAAB: Find All Auctions And Bid

Adam Cheyer, Luc Julia, Didier Guzzoni

August 23, 1998

Problem Statement

Online auctions are becoming increasingly popular on the Internet – more than one hundred web sites exist simply to help buyers and sellers exchange goods through auction-based mechanisms. Although auction meta-sites are beginning to emerge (e.g., www.bidfind.com), these sites provide only search engine capabilities. Simply finding an auction site to participate in, although useful, is not enough: managing the auction process is a time-consuming effort.

The proposed effort investigates the feasibility of applying agent technology to an electronic auction domain. Automated software agents would be responsible for providing the following capabilities to users of the service:

1. Find online auctions selling products the user is interested in.

2. Find the best market price available from commercial vendors for these products – this gives the user a baseline value against which to compare the value of an auctioned item.

3. Monitor relevant auction sites, acquiring knowledge about the patterns of buying and selling at that site (e.g., how often does a user truly find a bargain at a particular site).

4. Manage a collection of automated bidding agents who cooperate to achieve the user’s objectives in obtaining products at a good price.

The ideas outlined here have been implemented in a prototype system called FAAAB, which demonstrates (and allows experimentation with) many of the facets required for accomplishing the vision.

Requirements

To implement an auction finding and bidding service, at least the following major components will be required:

· web crawlers and information extractors to retrieve information about and from auction sites;

· a user interface to enable users to task and control monitoring and bidding agents;

· and a sophisticated agent scheduler to efficiently coordinate the efforts across auction agents.

This section outlines the basic requirements for each.

Crawlers and Extractors

There are at least two types of web data involved in our auction process: data which is highly dynamic in nature (e.g., an ongoing auction changes frequently as new bidders take action), and data which is more stable (e.g., the structure of a given auction site, or the set of auction sites themselves). The more static data can be efficiently cached by web-crawlers which refresh the cache every day or two, while the more dynamic data must be retrieved on demand from the source web page (by extractors). Crawlers generally have a database associated with them which caches their findings.
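The split between cached and on-demand data can be sketched as follows. This is a minimal Python illustration rather than the prototype's code (the prototype uses WebL scripts and an OAA database agent). The names `CachedCrawler`, `fetch`, `max_age_seconds`, and `extract_on_demand` are hypothetical, and the one-day default is an assumption based on the "every day or two" refresh rate mentioned above.

```python
import time
from typing import Callable, Dict, Tuple


class CachedCrawler:
    """Serves slowly changing data from a cache, refetching once it is stale."""

    def __init__(self, fetch: Callable[[str], str],
                 max_age_seconds: float = 24 * 3600,
                 clock: Callable[[], float] = time.time) -> None:
        self._fetch = fetch              # hypothetical page-fetching function
        self._max_age = max_age_seconds  # assumed refresh interval (one day)
        self._clock = clock              # injectable clock, useful for testing
        self._cache: Dict[str, Tuple[float, str]] = {}

    def get(self, url: str) -> str:
        now = self._clock()
        entry = self._cache.get(url)
        if entry is not None and now - entry[0] < self._max_age:
            return entry[1]              # fresh enough: answer from the cache
        page = self._fetch(url)          # stale or missing: go to the source
        self._cache[url] = (now, page)
        return page


def extract_on_demand(fetch: Callable[[str], str], url: str) -> str:
    """Highly dynamic data (e.g., a live auction) bypasses the cache entirely."""
    return fetch(url)
```

In this sketch a crawler for site structure would call `get`, while an extractor reading a live auction page would call `extract_on_demand`, so that bid prices are never served stale.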

Unless the information to be extracted is generic in nature, such as a URL or email finder or a keyword-based search index, knowledge must be encoded about the format of the information to extract from a particular web site. Since most of the development time associated with this effort goes into encoding this knowledge, having the right tools and languages with which to do so is essential.

Domain-specific knowledge encodes two types of information: how to navigate web sites (e.g., go to URL X, find a button labeled Y and click on it, and then fill out a form at the resulting page with the specified information); and how to extract information found at the site. Encoding languages should be able to represent both sorts of knowledge in as readable and concise a form as possible.

It is desirable that the tools that interpret or execute the domain-specific knowledge have the following properties:

· Multi-threaded (or multi-tasking) to be able to manage many knowledge-extraction requests simultaneously

· Replicate-able and/or mobile, so that new instances can be created and distributed according to load requirements

· Able to communicate with other components of the system, such as databases for caching information, user interfaces, etc.

User Interface

The User Interface (UI) to the target system should have the following properties:

· Be implemented in pure Java (with JFC Swing graphical components) to be portable and accessible from any Java-enabled web browser.

· Be rich enough to visually express a complex space of information: many agents will bid and monitor at auctions with changing prices, varied closing dates, etc. A user should have a global understanding of the current status of an entire multi-agent auction portfolio, and the ability to modify or control any aspect of the process.

· Intermittent operation: a user should be able to disconnect and reconnect at will.

· Lightweight: Additional low-profile UIs (e.g., banners) can update the user of portfolio events without requiring connection to the full user interface or focused attention by the user.

Agent Scheduler

An agent-scheduler must be imbued with the ability to efficiently manage and schedule information retrieval tasks for the auction monitoring and bidding agents. The scheduler will make decisions about when a bid should be considered based on user-definable “aggressiveness” parameters, the amount of time remaining until the auction closes, how important a particular auction is based on a prediction of potential success, etc.

Implementation

The ideas and requirements have, for the most part, been implemented in a prototype system called FAAAB (for “Find All Auctions And Bid”). Features and issues not yet accomplished by this prototype are discussed in the next section.

Integration Framework

From our requirements section, it is clear that the implemented system must use a client/server model, with client user interfaces providing monitoring and control of a server side that provides the finding, extraction, and bidding services of the system.

The Open Agent Architecture™ (OAA™) was selected as the integration framework for FAAAB, as the OAA enables rapid development of both Java-based client user interfaces and complex server applications made out of distributed components.

Crawlers and Extractors

In the requirements section, we spoke of the need for both tools and languages for expressing domain-specific extraction and navigational logic. After evaluating several in-house tools (DIFF-parse, DCG-parse, plus web agents) and commercial tools (AgentSoft’s LiveAgent Pro [2]), we chose Digital’s WebL product [3] as the best tool and language for our needs. Implemented in Java, WebL provides powerful features (parallelism concepts, a markup algebra combining query sets over regular expressions and structured HTML and XML representations, specialized web-related exception handling, and so forth). In addition, source code is provided for free, allowing us to easily incorporate the technology as an OAA agent, and to make extensions to it as necessary (e.g., add mobility).

The WebLOAA agent provides a generic OAA-enabled tool which can dynamically load knowledge scripts encapsulating a particular web site or service; each script becomes an agent in the OAA sense. Scripts can serve both as extractors and, when used in conjunction with an OAA database agent, as crawlers which cache their results for fast retrieval.

Auction Finders

The first task of the FAAAB prototype asks the user to enter a description of a product of interest, and then attempts to find auctions selling comparable products. This task was accomplished in two ways:

1. we created an extractor agent for an existing auction search site, BidFind.com [4];

2. we created a web crawler for a site not currently indexed by BidFind (www.webauction.com [5]) to demonstrate that we need not be reliant on the BidFind service.

Both the WebAuction agent and the BidFind agent were rapidly implemented as WebL scripts managed by the WebLOAA agent. See Appendix A for source code of the BidFind extractor agent, to get a sense of the power and elegance of the WebL language for web wrapping and extraction tasks.

Market Price Finder

Once a list of interesting auctions has been returned and displayed to the user, he or she should choose which sites are to be managed by FAAAB auction agents. For each auction returned, the user may visit the website or may request a search for the real market price of the auctioned object. Note that even though most auctions returned for a given search will offer relatively similar products, the products may have varying brands, optional features, and so forth, so it might be desirable to find the market price for each individual auction and not just for the group.

Even though product search engines are starting to appear (e.g., Jango [6], Junglee [7]), finding a good guess for the real market price of an object given only its description is not an easy task. Here is the approach that we are using for the moment:

Given a description of an object for sale at an auction, we first try to guess the major category (e.g., desktop computer, camera, flowers, etc.) for the product. Jango, the best product finder currently available, uses a Yahoo-like hierarchical category scheme, with pulldown menus for different choices. For instance, if looking for a laptop computer, you choose this category and then select criteria such as brand, model and processor speed from a preselected list. These criteria, both headings and values, are different for each category.

To guess the product category, we wrote a WebLOAA crawler that traverses all of the categories from Jango and pulls out the criteria and values for each category. Then for the given object description, we choose its category by taking the one which has the highest number of values present in the object description. The values are augmented by a hand-coded synonym list to increase the likelihood of positive matches. Note: a future enhancement would be to automatically generate pertinent keywords from the corpus of category items using statistical methods.
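The category-guessing rule described above can be sketched in a few lines. This is an illustrative Python version, not the WebLOAA crawler itself: the function names, the sample categories, and the synonym table are all hypothetical, and simple substring matching is an assumption, since the text does not say how "present in the description" is tested.

```python
from typing import Dict, List, Optional, Set


def expand(value: str, synonyms: Dict[str, List[str]]) -> Set[str]:
    """Return a criterion value plus its hand-coded synonyms, lowercased."""
    return {value.lower()} | {s.lower() for s in synonyms.get(value, [])}


def guess_category(description: str,
                   categories: Dict[str, List[str]],
                   synonyms: Dict[str, List[str]]) -> Optional[str]:
    """Pick the category with the most criterion values found in the description."""
    text = description.lower()
    best_name, best_score = None, 0
    for name, values in categories.items():
        # Count values (or any of their synonyms) that occur in the description.
        score = sum(
            1 for value in values
            if any(term in text for term in expand(value, synonyms))
        )
        if score > best_score:
            best_name, best_score = name, score
    return best_name  # None when no value matched at all


# Hypothetical sample data, standing in for values crawled from Jango.
CATEGORIES = {
    "laptop computer": ["Toshiba", "Pentium", "notebook"],
    "camera": ["Nikon", "zoom", "35mm"],
}
SYNONYMS = {"notebook": ["laptop"]}

print(guess_category("Toshiba laptop, Pentium 233", CATEGORIES, SYNONYMS))
```

Here "laptop" matches only through the synonym entry for "notebook", which is the role the hand-coded synonym list plays in the text.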

Once the major category has been determined, the findMarketPrice agent tries to fill out the search form for that category with relevant criteria taken from the target description. Resulting descriptions are then compared against the target description for similarity, and the price, description and URL of the best guess are returned to the user interface for display to the user. Note: this step is still under development, and in the meantime, a simple price-by-category result is returned as the answer.
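The comparison step can be illustrated with a small sketch. The text does not specify which similarity measure is used, so the Jaccard overlap of word sets below is purely an assumption, as are the names `Offer`, `jaccard`, and `best_market_match`.

```python
import re
from typing import List, Optional, Set, Tuple

Offer = Tuple[str, float, str]  # (description, price, url)


def tokens(text: str) -> Set[str]:
    """Lowercased alphanumeric words of a description."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: str, b: str) -> float:
    """Shared words divided by all distinct words; 0.0 if either side is empty."""
    ta, tb = tokens(a), tokens(b)
    if not ta or not tb:
        return 0.0
    return len(ta & tb) / len(ta | tb)


def best_market_match(target: str, offers: List[Offer]) -> Optional[Offer]:
    """Return the offer whose description is most similar to the target."""
    if not offers:
        return None
    return max(offers, key=lambda offer: jaccard(target, offer[0]))
```

The returned tuple carries the price, description, and URL that the text says are passed back to the user interface.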

Agent Scheduler

The agent scheduler, implemented in Prolog, is responsible for efficiently managing update requests for an entire community of auction bidding and monitoring agents and for webcrawler agents. As auction agents are created or modified, the agent scheduler plans future checkup times for the site based on:

· Closing date: the interval between scheduled checkups is proportional to the amount of time remaining until the auction closes. If the auction closes a week from now, it doesn’t make sense to check the auction page every minute. However, as the deadline approaches, more frequent checks are necessary.

· Auction importance: some auctions are more desirable than others for a variety of reasons. For instance, if one site has 500 copies of a product to sell and another site only has one, placing a winning bid at the first site is much more interesting because 500 other users will need to bid higher before your bid is surpassed.

· Users might also indicate a preference for a particular auction object over another.

· Aggressiveness parameter: a user can tailor an aggressiveness parameter which influences how often an auction agent bids.

· Real-world notifications: some auctions send an email when someone has outbid you, and an email agent could reschedule an immediate counter-bid (not yet implemented).
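To make the scheduling factors above concrete, here is a minimal Python sketch of a checkup-interval rule. It is not the Prolog scheduler: the function name, the 10% and 2% fractions, the 30-second floor, and the use of aggressiveness to scale checkup frequency (the text only says it influences how often an agent bids) are all illustrative assumptions.

```python
def checkup_interval(seconds_remaining: float,
                     aggressiveness: float = 0.5,
                     importance: float = 1.0,
                     min_interval: float = 30.0) -> float:
    """Seconds to wait before the next checkup of an auction.

    Assumed conventions: aggressiveness lies in [0, 1];
    importance is 1.0 by default and larger for favoured auctions.
    """
    if seconds_remaining <= 0:
        raise ValueError("auction already closed")
    # Base rule: wait a fixed fraction of the time remaining, so checks
    # become more frequent as the deadline approaches.
    fraction = 0.10 - 0.08 * aggressiveness  # 10% (passive) down to 2% (aggressive)
    interval = seconds_remaining * fraction / importance
    # Never poll faster than min_interval, and never sleep past the close.
    return min(max(interval, min_interval), seconds_remaining)
```

With these assumed constants, an auction closing in a week is checked roughly every ten hours, while one closing in an hour is checked every few minutes.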

User Interface

A user interface, implemented in Java and JFC, provides monitoring and control of FAAAB services from any web browser.

In the setup phase of FAAAB (Figure 1), users find and evaluate potential auctions of interest, and then create auction agents to monitor and bid on these auctions. The Setup tab of the user interface retrieves auction information from the BidFind and WebAuction crawlers (and any other dynamically available finders). Users may then choose to view the original web page featuring the auction or to search for the best online market price for the product offered by that specific auction. Note that multiple “tabs” can be created, each representing a group of agents (currently limited to 10 per group) acting upon auctions in a given “domain” (e.g., pentium computers, cameras, sunglasses, etc.)

Figure 1. Creating auction agents for “pentium” computers

For each domain tab created, a user can graphically view progress and results of auction agents (Figure 2). Agents are classified either as monitor agents, who simply record progress of a particular auction, or bidding agents, who autonomously make bids according to user instructions. The market price (actually, the highest market price for all auction products) and the (highest) max bid are displayed on a gauge. As the auctions unfold, the agents graphically move up the meter, displaying their current prices. Agents who have surpassed their max bid are colored with a red background.

An agent editor enables the user to tailor various properties of the auction agent, such as max bid and aggressiveness. Additional information is also available, such as the bidding history to the current moment, market price for the product, etc.

Figure 2. Bidding and monitoring agents for “pentium” auctions

Alternate interfaces are also possible. Figure 3 displays a lightweight “banner” interface which unobtrusively keeps the user informed as to updates by his or her auction agents.

Figure 3. A lightweight banner interface displaying updates

The Big Picture

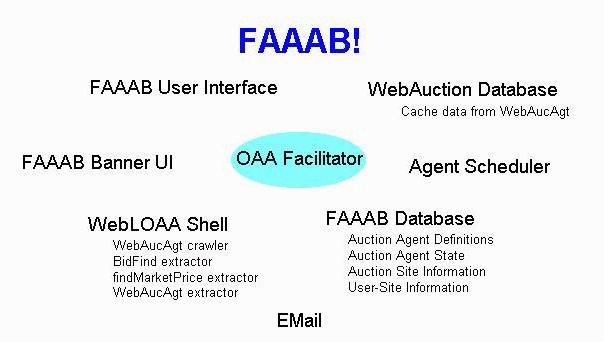

Figure 4 illustrates the architectural layout of the FAAAB components within the OAA. This section details a few notes on information flow and data storage choices implemented in the prototype.

Figure 4. Architecture of FAAAB Prototype

The main FAAAB user interface is accessed from a web browser. Typically, a user will begin by issuing searches for auctions selling interesting products. The results of these searches come from cached data stored in the WebAuction database, and recalculated-on-demand data retrieved by the BidFind extractor agent. Web crawler cache updates are managed by the Agent Scheduler.

For a given auction found by the above process, the user can query market price information about its products using the findMarketPrice extractor, and view full information about the auction using the UI browser.

A user then selects a subset of auctions to monitor using FAAAB agents – information about each auction agent is stored in the FAAAB database. A user can edit and tailor agent-specific information using the editor provided by the UI.

The Agent Scheduler is notified through OAA’s trigger mechanisms of new or modified auction agent definitions. For each auction agent, the scheduler generates a monitoring plan based on auction closing date, importance of the auction site, user-tailorable aggressiveness parameters, etc.

When an auction agent “checkup” time arrives, the scheduler sends a request for an extractor to read the auction site and retrieve all information about it. If a bid should be made according to the optimal bidding strategies for the user’s portfolio, a request is made to available bidding wrapper agents. The results of monitoring and bidding are written to the AucAgtState predicates in the FAAAB database.
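The decision made at a checkup can be sketched as below. The text does not define the "optimal bidding strategies", so this Python fragment substitutes a deliberately simple rule (bid the minimum increment unless that would exceed the user's max bid). The `AuctionSnapshot` fields and the `next_bid` function are hypothetical stand-ins for what an extractor would report and what would be handed to a bidding wrapper agent.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AuctionSnapshot:
    """Assumed shape of the data an extractor returns for one auction."""
    current_price: float
    min_increment: float
    we_are_high_bidder: bool
    closed: bool


def next_bid(snapshot: AuctionSnapshot, max_bid: float) -> Optional[float]:
    """Return the amount to bid now, or None if no bid should be placed."""
    if snapshot.closed or snapshot.we_are_high_bidder:
        return None                      # nothing to do at this checkup
    candidate = snapshot.current_price + snapshot.min_increment
    if candidate > max_bid:
        return None                      # would surpass the user's max bid
    return candidate
```

A real strategy would also weigh the other agents in the user's portfolio; this sketch looks at one auction in isolation.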

The FAAAB user interface and banner user interface both receive update notifications about change in agent state, and display the results accordingly.

An email agent can be used for sending final reports about history and results when an auction closes, and for detecting real-world notifications that another user has outbid you.

Note: the system is extensible and can operate in disconnected mode. As new bidding and monitoring extractor and crawler agents are dynamically added to the system, they will automatically be integrated into the FAAAB process. Disconnected operation is available because both the user interface agents and agent scheduler store all state information in persistent databases and reload this information upon connection at a later time.

Conclusions & Future Work

The FAAAB prototype illustrates that the construction of an automated meta-auction site management service is a feasible endeavor. The key contributions of the effort are:

· Selection of a representation language (WebL) for encoding navigational and extraction knowledge for web sites.

· Design and implementation of multiple user interfaces that enable ubiquitous, disconnected access to and control of the auction agents.

· Design and implementation of bidding and monitoring strategies and scheduling.

· Integration within a flexible architecture that facilitates light client UIs and complex, distributed, extensible server implementations.

Concerns raised by the prototype are:

· Scalability: The WebL language, although extremely powerful, may not be “industrial-strength” enough for a large commercial application. Other “shortcuts” were used, such as a Prolog database agent instead of something like Oracle. Also, OAA as an infrastructure, while highly suitable for fast development of complex systems, would not, in its present form, be suitable for a large number of transactions.

Although much work was accomplished in the 1 week spent on this effort so far, the system can still be improved considerably. Here are several remaining extensions and improvements.

· Many more crawlers and extractors can be written to encapsulate additional auction resources.

· The current version of the WebLOAA agent serializes incoming requests for information extraction. A future version should use the parallelism constructs inherent in Java and WebL to handle multiple requests at once. This is a high priority task for efficiency reasons!

· FindMarketPrice: the hand-coded synonym dictionary could be replaced by automatically-generated keywords gleaned from sample items for each category. This would most likely greatly improve this agent’s ability to make good price guesses.

· Improved agent scheduler:

· Optimizes information retrieval queries across agents

· Better bidding strategies

· Integration with OAA’s ubiquitous access system to provide over-the-phone monitoring and control.
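The move from serialized to parallel request handling, listed above as a high-priority improvement to the WebLOAA agent, might look like the following. This is a generic Python sketch using a thread pool, not the Java or WebL constructs the text refers to. The names `extract_all` and `extract`, and the pool size of eight, are assumptions.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List


def extract_all(requests: List[str],
                extract: Callable[[str], str],
                max_workers: int = 8) -> Dict[str, str]:
    """Run one extraction per request concurrently and collect the results."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map preserves input order, so results line up with requests.
        results = list(pool.map(extract, requests))
    return dict(zip(requests, results))
```

Because extraction is dominated by waiting on remote web pages, even a modest pool lets slow sites stop blocking requests to fast ones.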

Questions & notes for investigation:

· What happens if many people use the automated auction-based service? The bargains no longer slip through the cracks…

· Not all sellers are equivalent – agents should do background checks on sellers and allow user to contact them directly over the phone…

Related Work & Resources

1. MIT Media Lab’s KASBAH experiment: multi-agent implementation of a commercial marketplace, where both buyers and sellers are represented by agents. What we can learn: parameters and algorithms for automated buying agents. http://ecommerce.media.mit.edu/Kasbah/

2. AgentSoft’s LiveAgent Pro: A scripting language for automating the web. Semi-automatic generation of scripts through construction through example. Cumbersome to use… http://www.agentsoft.com/

3. Digital/Compaq’s WebL language: A scripting language implemented in Java which contains powerful “Markup Algebra” and exception handling features. Free! http://www.research.digital.com/SRC/WebL

4. BidFinder: An auction meta-site search engine, allowing users to find current auctions for products (from keywords). http://www.bidfinder.com/

5. WebAuction: One of the most popular and large online auction sites. http://www.webauction.com/

6. Jango: Bought by Excite, the premier product finder on the web. Spinoff from University of Washington (Etzioni & Weld). http://www.jango.com/

7. Junglee: Similar to Jango but currently only for resume selling/buying. http://www.junglee.com/

Appendix A: BidFind Extractor Agent

//
// WebL extractor agent to query the BidFind search engine
// and return results in a useful form
//
// Responds to OAA queries of the form:
//   find(auctions, SearchString, Parameters, Results)
//
// Results are of the form:
//   [ auction(SiteName, AuctionId, AucDescList), auction()... ]
//
// AucDescList has the form:
//   [ site(SiteName), desc(TextDescOfProduct), price(P),
//     url(SpecificUrl), date(ClosingDate) ]
//
// Copyright 1998 by SRI International

import Files, Str, OAA;

// OAA solvables for extractor agent
export var Solvables = "[find(auctions, Query, Params, Result)]";

// Query the bidfind site for a search string and return Results
export var CurrentAuctions = fun(query, params)
    var q = OAA_undoubleQuotes(OAA_removeQuotes(query));
    var res = "[";
    var P;

    try
        P = Timeout(60000, Retry(
                PostURL("http://www.bidfind.com/cgi-bin/af1.pl",
                        [. sb="y", st="1", id=q .])));
    catch E
        on true do
            return "[]"    // Failure if can’t load page
    end;

    // Search using a sequence pattern
    var H = Seq(P, "strong # a # font");

    // Iterate over all auctions found and construct result
    every h in H do
        var A = Str_Split(Text(h[4]), "()");
        var elt;
        if (Size(A) > 1) then
            elt = "auction('" + Str_Trim(A[1]) + "',id," +
                  "[desc('" + OAA_doubleQuotes(Str_Trim(Text(h[2]))) + "'),url('" +
                  Str_Trim(h[2].href) + "'),price('" +
                  Str_Trim(A[0]) + "'),site('" +
                  Str_Trim(A[1]) + "')])"
        else
            elt = "auction(unknown_site, id," +
                  "[desc('" + OAA_doubleQuotes(Str_Trim(Text(h[2]))) + "'),url('" +
                  Str_Trim(h[2].href) + "'),price('" +
                  Str_Trim(A[0]) + "')])"
        end;

        // Add separator commas
        if (res != "[") then
            res = res + ","
        end;
        res = res + elt;
    end;

    res = res + "]";
    return "[find(auctions," + query + "," + params + "," + res + ")]";
end;

// Main OAA event callback
export var DoEvent = fun(func, args)
    if (func == "find") then
        var argList = OAA_stringListToWebLList(args);
        return CurrentAuctions(argList[1], argList[2]);
    else
        return "[]"
    end
end;
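For readers unfamiliar with WebL, the result-building part of the extractor can be restated in Python. This sketch covers only the string assembly, not the page query or the OAA callback. The helper names are hypothetical, and the assumption that `OAA_doubleQuotes` doubles embedded single quotes (the usual Prolog convention) is ours, not something the listing confirms. For simplicity the sketch applies that quoting to every field, whereas the listing applies it only to the description.

```python
from typing import List, Optional


def prolog_quote(text: str) -> str:
    """Trim, wrap in single quotes, and double embedded quotes (assumed convention)."""
    return "'" + text.strip().replace("'", "''") + "'"


def auction_term(desc: str, url: str, price: str,
                 site: Optional[str] = None) -> str:
    """Build one auction(...) element in the layout used by the extractor."""
    fields = [
        "desc(" + prolog_quote(desc) + ")",
        "url(" + prolog_quote(url) + ")",
        "price(" + prolog_quote(price) + ")",
    ]
    if site:
        fields.append("site(" + prolog_quote(site) + ")")
        head = prolog_quote(site)
    else:
        head = "unknown_site"            # same fallback atom as the listing
    return "auction(" + head + ",id,[" + ",".join(fields) + "])"


def result_list(terms: List[str]) -> str:
    """Join elements into a bracketed, comma-separated list."""
    return "[" + ",".join(terms) + "]"
```

Joining with `",".join` replaces the explicit separator-comma bookkeeping that the WebL loop performs by hand.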